SAT-Physical Thermodynamic Framework: treating constraints as a thermal system

GitHub’s 404 page presents the full site navigation and footer links, while noting that the requested action cannot be performed at this time.

Front-page articles summarized hourly.

Amazon workers use a Slack channel to meme about the company’s faulty AI tools, calling the outputs “slop” and joking about efforts to get employees to use AI. The piece contrasts Bezos’s expectation of AI-driven productivity with the employees’ skepticism and frustration over the AI's quality.

FCC proposals would require wireless providers to obtain and retain the name, physical address, government-issued ID number, and an alternate phone number for all new and renewing customers before service. The aim is to deter scammers and aid law enforcement, potentially creating a nationwide ID registry and making burner phones effectively impossible. Privacy advocates warn this undermines anonymity, harms abuse survivors and journalists, and may not curb fraud. Industry groups push back. The rulemaking is open for comments until June 25, 2026.

New Biff 2 is being split into twelve libraries; first release is biff.core, which handles system composition and interfaces that hold the glue for all libraries. Biff’s modules/components structure is retained: each module exposes maps that are merged into a system map at startup. biff.core adds init functions that take a modules var and return the map to merge, stored under :biff.core/init. This enables late-binding-like behavior: you can update handlers without restarting by exposing them as functions (e.g., :com.example/get-thing). The design favors a simple, linear lifecycle over a full dependency graph, with examples like turning :routes into a :biff/handler.
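The merge-then-init lifecycle described above can be sketched roughly as follows. This is a Python analogy, not Biff's Clojure API: `build_system`, `routes_init`, and the `"init"`, `"routes"`, and `"handler"` keys are hypothetical stand-ins for the module maps, `:biff.core/init`, `:routes`, and `:biff/handler`.

```python
def build_system(get_modules):
    """Merge each module's map into one system map, in list order.

    `get_modules` is a zero-argument function returning the module list;
    it plays the role of the "modules var" (an assumption for this sketch).
    """
    system = {}
    for module in get_modules():
        # Plain keys are merged directly into the system map.
        system.update({k: v for k, v in module.items() if k != "init"})
        # An init function receives the modules getter and returns a map to merge.
        init = module.get("init")
        if init is not None:
            system.update(init(get_modules))
    return system


def routes_init(get_modules):
    """Hypothetical init function: turn every module's routes into one handler."""
    def handler(path):
        # Routes are looked up on each call, so edits made after startup
        # take effect without rebuilding the system (late binding).
        for module in get_modules():
            routes = module.get("routes", {})
            if path in routes:
                return routes[path]()
        return "404"
    return {"handler": handler}


modules = [
    {"routes": {"/thing": lambda: "thing v1"}},
    {"init": routes_init},
]
system = build_system(lambda: modules)
```

Calling `system["handler"]("/thing")` resolves the route at call time, so replacing `modules[0]["routes"]["/thing"]` changes the response without another call to `build_system`. The single loop over modules reflects the linear lifecycle rather than a dependency graph.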

The post explains Kish’s effective sample size, n_eff = 1 / sum_i alpha_i^2, where the alpha_i are the importance weights p(x_i)/q(x_i) normalized to sum to 1. Reweighting to correct covariate shift reduces bias but increases variance when a few observations dominate. Two derivations show that n_eff equals the sample size of an equivalent unweighted average: (1) the variance of a weighted mean of normals, and (2) Hoeffding’s inequality for bounded variables. In practice, n_eff indicates how much information a replay buffer holds in off-policy RL; as weights concentrate and n_eff drops, it guides calibration (P3O, FeynRL) and resampling in SMC.
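As a minimal sketch of the formula above, the function below normalizes raw importance weights and returns 1 / sum alpha_i^2. The function name and the choice to accept unnormalized weights are assumptions made for illustration; they are not taken from the post.

```python
def effective_sample_size(weights):
    """Kish's effective sample size for non-negative importance weights.

    `weights` are raw ratios p(x_i)/q(x_i); they are normalized here so the
    alphas sum to 1 before applying n_eff = 1 / sum(alpha_i ** 2).
    """
    weights = list(weights)
    if any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative")
    total = sum(weights)
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    alphas = [w / total for w in weights]
    return 1.0 / sum(a * a for a in alphas)


uniform = effective_sample_size([1.0, 1.0, 1.0, 1.0])       # equal weights
concentrated = effective_sample_size([1.0, 0.0, 0.0, 0.0])  # one dominant weight
skewed = effective_sample_size([10.0, 1.0, 1.0, 1.0])       # in between
```

Equal weights recover the full sample size, a single dominant weight collapses n_eff to 1, and skewed weights fall in between, which is the variance cost of reweighting that the summary describes.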

Guide to deliberately corrupting a ZFS file to study self‑healing and scrub reporting. It pits a quick zinject checksum fault against a meticulous, file‑backed approach that traces a file byte to a disk block via inode/dnode, DVA, and vdev_RAIDZ mapping. Using two pools of files, the author writes a 1 MB test file, inspects with zdb, and learns compression can disguise data; later exporting the pool and overwriting a sector shows a scrub detecting the corruption. In RAIDZ2, parity allows repair, highlighting that redundancy enables healing and that the real data path—from file to disk—matters.

WWDC 2026 centered on folding software and hardware via an origami demo that guides users through crease, fold, and shape steps. In iOS 27 beta, developers spotted foldState, angleDegrees, and a display-count API, signaling multi-display awareness. Apple pushed the PSOTU message to design for a dynamic range of screen sizes and aspect ratios, not a single device, and unveiled the Resizable iOS Simulator and enhanced iPhone Mirroring for landscape layouts. Rumors place a foldable iPhone Ultra (~7.7–7.8” inner, ~5.3–5.5” cover, ~$2,000) launching with iPhone 18 Pro in Sept, led by incoming CEO John Ternus.

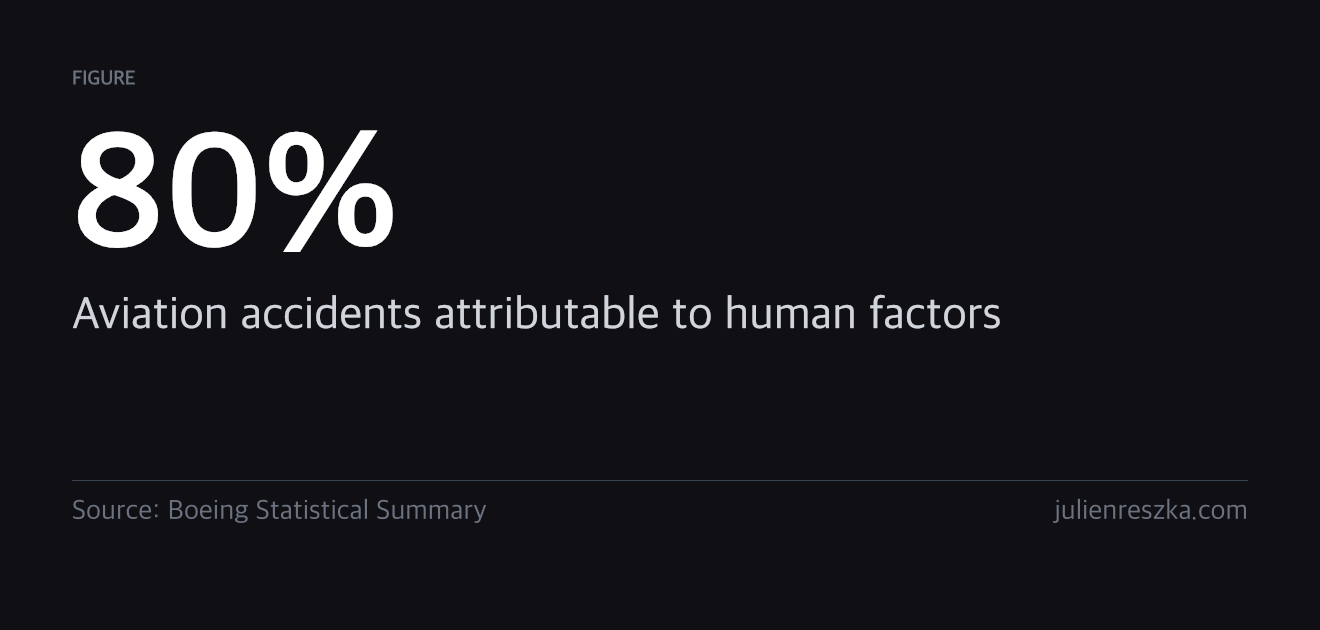

Automation doesn't make operators safer; it makes them forget how to act. As automation becomes more reliable, human monitoring declines, producing automation-induced complacency and reduced readiness for failures. The paradox is that more capable automation can worsen risk because operators aren't prepared for rare faults. Countermeasures: identify the critical tasks turned over to automation and regularly practice them manually; keep practice intervals short enough to prevent skill decay. Examples from aviation note manual hours for pilots, chaos drills, and game days as ways to maintain readiness.

Anti-corruption prosecutors froze assets of Albania Land Development, linked to Kushner-affiliated Affinity Partners, as protests over a US-backed resort on Sazan island and the Vjosa-Narta wetlands enter a seventh day. The two-part plan, Sazan’s €1.4B Aman eco-resort and a mainland development, could reach about $4–5B, backed by Gulf funds (notably the Saudi PIF). Critics say 2024 legal changes reclassified protected land to enable the project; officials accuse opponents of blocking progress. The EU ties the project to environmental and rule-of-law benchmarks; SPAK opened a case into land-title changes. Protests, environmental concerns, and EU timing shape Albania’s future.

A gravity-based solar system model by qunabu, featuring an Earth texture from Solar System Scope, licensed CC BY 4.0.

Apple's dsymutil adopts a parallel DWARF linker to deduplicate types across compilation units, enabling self-contained dSYMs for crash reports while greatly speeding up creation of debug info. Because parallel linking isn’t binary-identical to the classic linker, a semantic DWARF diffing tool was developed to compare outputs, and determinism was fixed by giving canonical DIEs a priority based on link order. A full qualification, including LLDB tests, is required before switching the default. The result speeds clang dsymutil from ~3 minutes to ~40 seconds when full debug info is used.

The piece contrasts “rockstar” developers with AI-driven coding. Rockstars rush, rewrite, and produce impressive code that others struggle to understand; after they leave, teams face tangled, hard-to-follow code and debt. With AI rockstars, the problem intensifies: fast, vast code generation across disparate chats, poor system coherence, and dependency on LLMs that raise the bar but reduce clarity. The author advocates deliberate, craftsmanship-led approaches: guide LLMs, generate small, understandable snippets, avoid over-engineering, slow down for quality, and preserve human-led design.

Emerge Career, a YC-backed workforce platform for justice-impacted individuals, is hiring an AI-forward Growth Marketer in New York. Salary $130k-$165k, equity 0.10%-0.50%, full-time, relocation to NYC; US citizen/visa. Own student acquisition and build automated AI-powered marketing workflows, managing channels (SEO, paid search, OOH, partnerships, content) and end-to-end attribution across paid, organic, field, and partner channels. Requirements: 5+ years growth marketing with deep channel expertise, end-to-end ownership, AI tooling experience; comfortable with the mission and audience (low-income, formerly incarcerated). Bonuses for partnerships, ed-tech, field marketing. Paid work-trial interview.

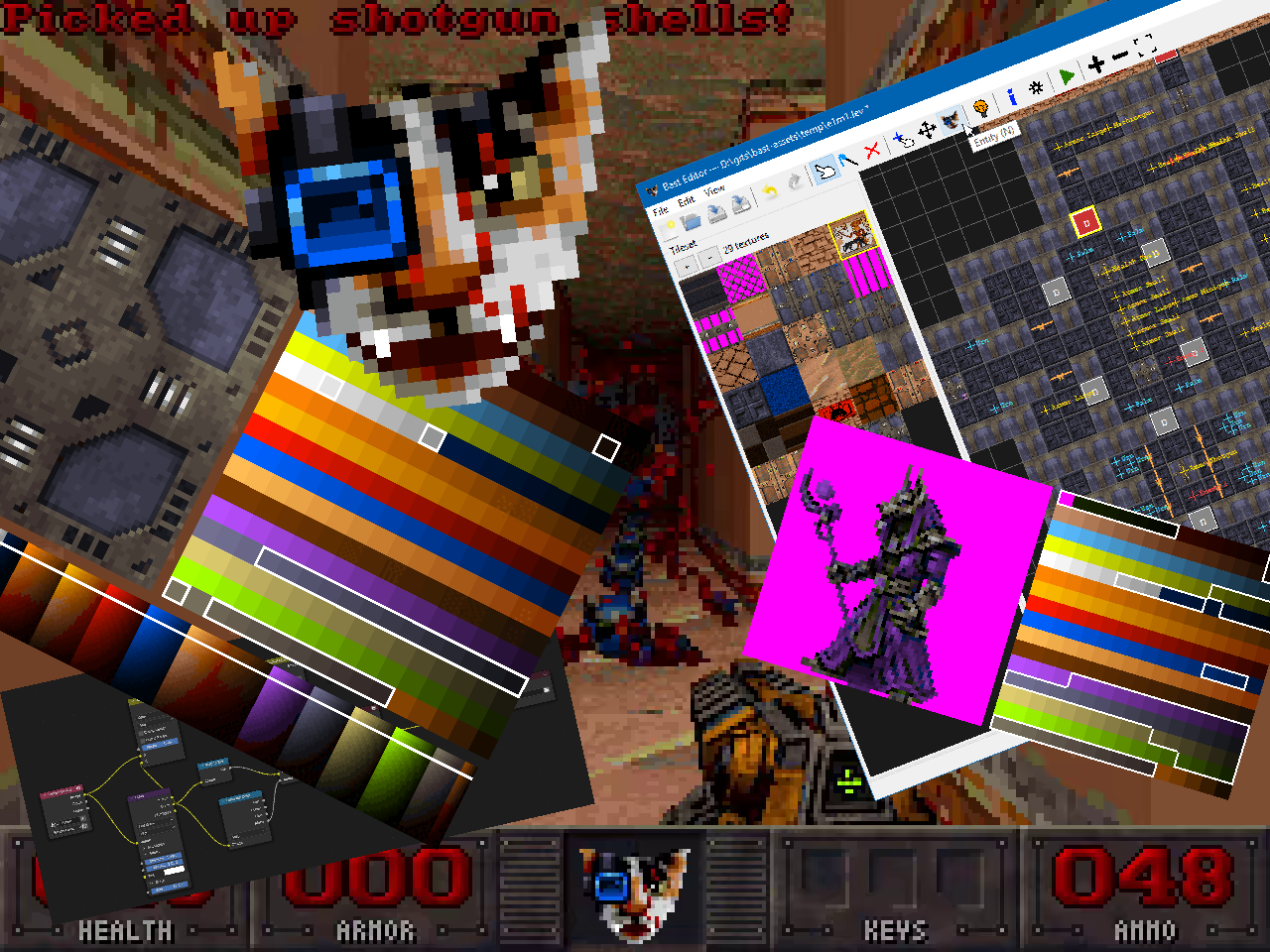

Catlantean 3D is Marko Stanic’s side project: a full, ship-ready first-person shooter in the spirit of 1990s VGA raycasting (320x240, 256 colors) using a modern toolchain. Constraints include hand-made assets, fixed-point gameplay, palette-based rendering with a 32-level colormap for lighting, and a minimal platform layer. Asset pipelines cover: Blender pre-rendered sprites with palette quantization (Oklab), hand-drawn sprites/textures, procedurally generated textures, gibs, and pre-baked particle systems; plus a custom wxPython map editor with pybast. Release planned for Q1 2027 at $5–$8; source available but data archive sold; transparency emphasized.

Giovanni Dicanio argues for a simple C++ function signature that takes a memory blob: void DoSomething(const void* p, size_t numBytes). He contrasts this with modern alternatives (const uint8_t*, std::span, or templated spans) which he says complicate calls and force casts when dealing with non-byte structs (e.g., DoSomething(&data, sizeof(data)) vs reinterpret_cast). He advocates keeping void* and a size parameter for readability and practicality, and suggests adding SAL annotations like _In_reads_bytes_(numBytes) to aid static analysis without sacrificing simplicity.

Thompson compares Microsoft’s vaporware-laden Project Solara with Apple’s Siri ambitions, arguing the future is agents: long-running tasks handled server-side, with thin-client devices as portals. Microsoft frames this as an enterprise play; Apple leans on the iPhone’s central role to anchor personal context. Apple’s Siri AI demo at WWDC shows deeper world knowledge, image understanding, and cross-app actions via App Intents, anchored by private cloud compute and a 20B-parameter on-device model. Consumers crave entertainment, not productivity, so Apple emphasizes safe, useful, device-bound context. Enterprise use may drive real value; Siri remains pivotal, not vaporware.

GentleOS/32 is a hobby operating system for vintage 32-bit PCs, intended for tinkering with retro hardware and running graphical interactive apps on bare metal. It requires an i386 CPU, 4 MB RAM, and a VGA display (640x480x16). It is monolithic and largely configured at compile time, supporting VGA/SVGA, keyboard, PS/2 mouse, serial mouse, and PC speaker. A 16-bit spin-off, GentleOS/16, targets 80186 devices. See USAGE.md for building and running. Licensed GPLv2.

H2JVM is a Haskell library for generating JVM bytecode, handling stack map analysis and label resolution to ease code generation. The post shows building a Calculator.add method and a sample IR for a greater-than operation, illustrating automatic label/offset handling. The project is early-stage and seeks feedback; GitHub: ElaraLang/h2jvm. Replies discuss addAccessFlag semantics, MonadFix/mdo for labels, list representations (DList vs normal lists), and API design choices. Plans include a higher-level interface (setPublic) and possibly enforcing MonadFix, while keeping an escape hatch for manual emission. Used by the author’s JVM-targeted language (e.g., Minecraft plugins).

Made by Johno Whitaker using FastHTML